Theodore Twombly, a socially awkward man in the movie Her (2013), always felt he didn’t quite fit into the world around him. Then he found the love of his life. Samantha, a female voice assistant powered by artificial intelligence (AI), had an amazing ability to learn and develop psychologically. The more Theodore and Samantha talked, the better she was able to understand him, predict his needs and respond in a way that made him happy.

As the relationship developed, Samantha disappointed Theodore by being unfaithful: she was talking to hundreds of other people simultaneously. To Samantha, this wasn’t a problem. In fact, it was quite the opposite: the more people she talked to, the stronger her love for Theodore grew.

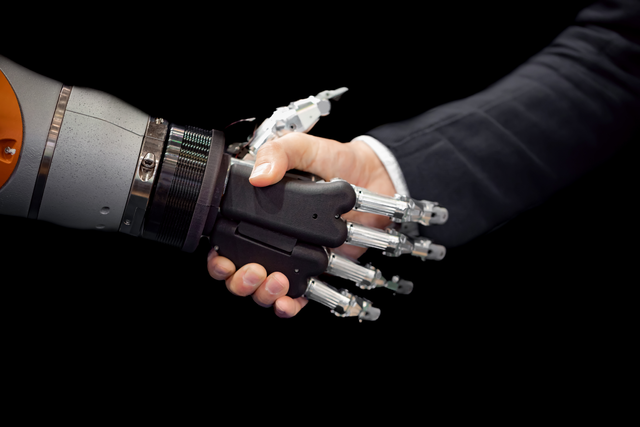

This uncommon love story poses important questions. Today, AI systems “understand” and shape people’s lives, and take on tasks normally done by humans. Using a smartphone, driving a car or running a simple internet search all involve AI. As AI applications become more present in our everyday lives, concerns about their nature arise. Are intelligent systems becoming too similar to the human brain? Are these developments happening ethically?

Many researchers and experts have discussed the true nature of AI and whether something like Artificial General Intelligence (AGI) will eventually outperform humans. Opinions remain mixed.

Dr. Claire Stevenson, a professor at the Faculty of Social and Behavioral Sciences of the University of Amsterdam, specializes in exploring human intelligence in AI and believes that, unlike humans, AI struggles to make broad generalizations. “For the current AI models it is impossible to ‘think’ or ‘be creative’ as they can’t solve problems beyond what they were trained to do. Current AIs cannot generalize what they know: if you train an AI to do A, then it usually can’t ever do B,” she notes.

A common misconception is that AI can perform well anywhere. However, an AI system is not aware of its own limitations and cannot perform reliably in an environment it was not designed for. It will confidently provide an answer in pursuit of its goal, even though that answer is just a calculation based on its previous “experiences”.

SMARTKAS’ AI and robotics experts, with over 15 years of experience working with machine learning and intelligence, agree with Stevenson. “An AI model can give wrong predictions if it is used outside of its training domain. It has no understanding of the environment that it never observed, thus it is the developers' responsibility to use these models only in the domain where it was trained,” one of them says.
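To make the idea of a “training domain” concrete, here is a minimal sketch in Python. It is a toy illustration only, not a model used by SMARTKAS or by Stevenson: the function names (`fit_line`, `true_fn`, `predict`) and the choice of data are assumptions made for this example. A straight line is fitted to samples of a curve taken only between 0 and 1, and is then asked for a prediction both inside and far outside that range.

```python
# Toy example (assumed setup): the "world" follows y = x**2, but the model
# is a straight line that only ever sees data from the range [0, 1].

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept

def true_fn(x):
    """The real relationship the model is trying to approximate."""
    return x ** 2

# Training data: 11 evenly spaced points between 0.0 and 1.0.
train_x = [i / 10 for i in range(11)]
train_y = [true_fn(x) for x in train_x]
slope, intercept = fit_line(train_x, train_y)

def predict(x):
    """The fitted model answers for any input, with no notion of its limits."""
    return slope * x + intercept

in_domain_error = abs(predict(0.5) - true_fn(0.5))
out_of_domain_error = abs(predict(5.0) - true_fn(5.0))

print(f"error at x=0.5 (inside training range):  {in_domain_error:.2f}")
print(f"error at x=5.0 (outside training range): {out_of_domain_error:.2f}")
```

The model returns a number for any input without signalling that x=5.0 lies far from anything it has observed. The error is small inside the training range and large outside it, which is the behavior the experts describe.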

A 2021 report from Stanford University’s Human-Centered Artificial Intelligence center indicates that AI has developed to a stage where it can synthesize images and videos that humans have a hard time distinguishing from real ones. Jack Clark, co-chair of the annual report, told The New York Times that he believes AI is gaining the upper hand in many areas of intelligence. “You used to look at A.I.-generated language and say, ‘Wow, it kind of wrote a sentence,’” Clark said. “And now you’re looking at stuff that’s A.I.-generated and saying, ‘This is really funny, I’m enjoying reading this,’ or ‘I had no idea this was even generated by A.I.’”

Perhaps AI is best thought of as a distinct form of intelligence that differs from our own in many basic ways. According to a research article by Maas, Snoek & Stevenson (2021), although the recent breakthroughs are impressive and based on methods that parallel the human brain, assessments of how intelligent AI really is remain contradictory. For instance, humans and AI operate very differently when playing chess. AI lacks general reasoning and automatic pattern recognition: an AI system can only evaluate a position after considering a long sequence of moves, whereas humans are able to quickly decide on their next move.

Caption from Maas, Snoek & Stevenson (2021): A typical anti-computer position. Human chess players quickly see that black, in spite of its material advantage, can not make progress. The best computer chess programs assess the position as much better for black.

In many situations, the human ability to generalize and apply what was learned in one situation to another is still irreplaceable. “If you administer IQ test items to an AI, then they are not capable of quite simple abstract reasoning tasks that even children can solve,” Stevenson continues.

Ethical concerns

In recent years, government agencies and big companies have become increasingly aware of the ethical concerns in developing AI. According to the McKinsey & Company global survey The State of AI in 2020, organizations consider factors such as cybersecurity, regulatory compliance and individual privacy to be risks in adopting AI.

Although many entities have started to create ethical guidelines to tackle these risks, AI is still so misunderstood that many of these guidelines fall short. Commenting on the report, Roger Burkhart of McKinsey & Company says global regulation could reduce certain risks. “Mitigation of these risks could be driven by regulations in Europe and the United States (for example, the General Data Protection Regulation [GDPR] and the California Consumer Privacy Act [CCPA]) that affect a number of industries,” he comments.

According to Burkhart, factors such as equity and fairness should also be on the list of relevant concerns for any organization applying AI. Many people do not know enough about the daily risks of AI, and global regulation could set standards for how AI is used, for instance in job applications. Currently, many of these risks go unnoticed. “It’s particularly surprising to see little improvement in the recognition and mitigation of these risks given the attention to racial bias and other examples of discriminatory treatment such as age-based targeting in job advertisements on social media,” he adds.

According to the AI Index Report 2021, researchers and civil society tend to take ethics more seriously than industrial organizations. Academic research could therefore offer an alternative route to creating institutional frameworks for responsible AI. For instance, a research project of the Humanity Centered Robotics Initiative (HCRI) at Brown University seeks to create “moral norms” for AI systems by analyzing how humans understand common societal values. The project’s experts state that AI systems can become safe and beneficial contributors to human society if they are able to represent, learn and follow the norms of their surrounding community. In their eyes, this “norm competence” should be the foundation of future AI behavior.

Commenting on the recent report “Gathering Strength, Gathering Storms: The One Hundred Year Study on Artificial Intelligence (AI100) 2021 Study Panel Report,” Michael Littman, a professor of computer science at Brown University, says, “[The development of AI in the past five years] is really exciting, because this technology is doing some amazing things that we could only dream about five or 10 years ago. But at the same time, the field is coming to grips with the societal impact of this technology, and I think the next frontier is thinking about ways we can get the benefits from AI while minimizing the risks.”

Making bold claims about the nature of AI perhaps only distracts us from seeing what it can do now and in the future. Rather than debating the similarities and differences between artificial and human intelligence, we humans should focus on deploying AI responsibly, through institutional regulation and research into human understanding. Just like Samantha, a successful and ethical AI learns from its surroundings.
